By Andreas Schleicher, OECD Director for Education and Skills

Generative artificial intelligence (GenAI) has quickly embedded itself across the world. In schools, students now consult chatbots for homework and teachers use apps to create lesson plans. This revolution has happened in a remarkably short time: ChatGPT was only released in late 2022. The technology's potential has fuelled this rapid expansion.

Unlike earlier waves of education technology, much of GenAI is freely accessible to anyone with an internet connection. The user experience is intuitive, with no prior training or coding skills required. And the technology’s versatility means it can support a wide range of tasks, from drafting essays to creating learning experiences, all within seconds.

Given GenAI’s capabilities, its rapid uptake across education settings is unsurprising. The OECD’s Digital Education Outlook 2026 argues that GenAI brings many opportunities but also some risks. GenAI tools can support learning when guided by clear teaching goals or designed specifically for education. However, when AI removes the productive struggle essential for learning, students may complete tasks faster and achieve better immediate results, but their understanding may be less deeply consolidated. This can diminish cognitive stamina, deep reading, sustained attention and perseverance. Without a clear pedagogical purpose, GenAI can foster what researchers call “metacognitive laziness” and disengagement.

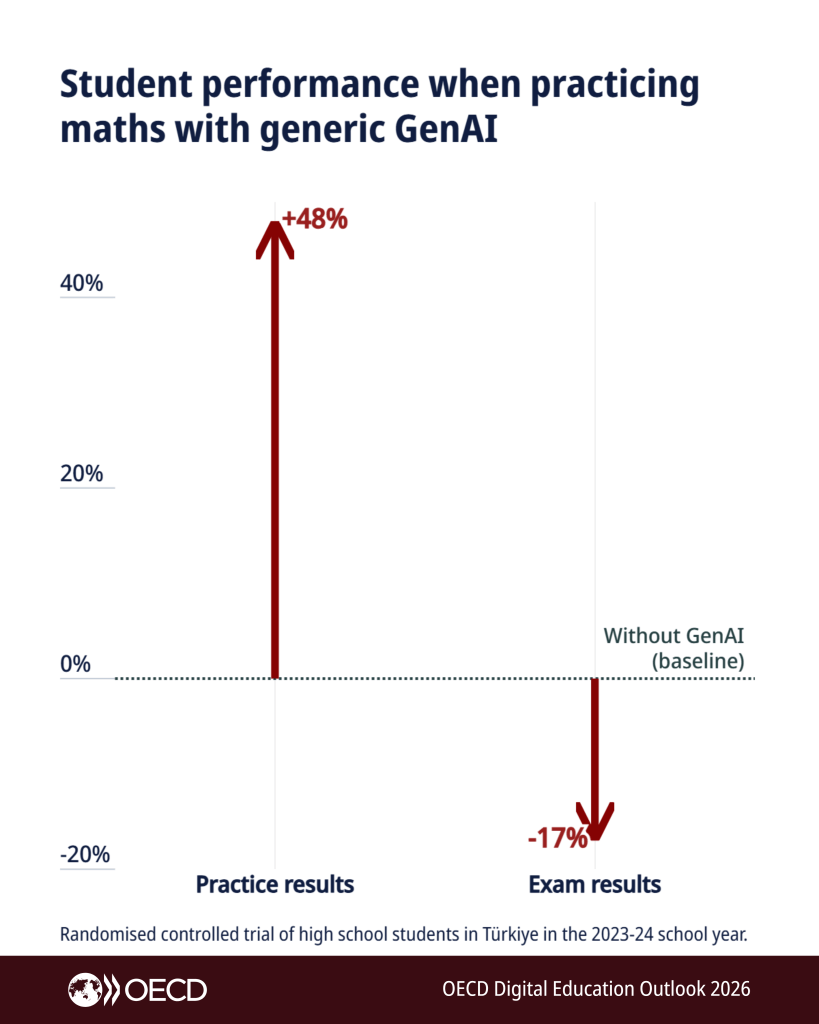

The Outlook also reveals the limitations of using general-purpose, non-educational GenAI tools for learning. For example, several research studies show that students who studied with a general-purpose GenAI tool produced higher-quality responses than those with no access. However, this improvement did not carry over to their exams, where their performance got worse.

Although general-purpose GenAI tools can work when used with a pedagogical purpose, specialised GenAI tools built for learning show more promise. These are designed with clear pedagogical intent and grounded in the science of how people acquire knowledge and skills. Evaluations show that these tools can often lead to better learning outcomes, for example when used as a creative or collaborative learning partner, or as a virtual research assistant.

Early trials suggest that GenAI-powered tutoring assistants can increase human tutors’ capacity to help students solve problems. According to one study, less-experienced tutors assisted by GenAI used better tutoring strategies, and their students’ mastery of mathematics topics improved significantly. In another study, researchers found that an interactive chat-based teacher-training tool, which lets novice teachers practise teaching with simulated students, improved teachers’ preparedness and confidence. While these studies show promise, more research is needed to assess how effective such tools are across different educational settings.

Looking ahead, GenAI is clearly not a magic wand that will solve all of education’s problems. It can magnify both good pedagogy and bad. Governments need to ensure that GenAI is used with intent, to enrich learning rather than replace cognitive effort or diminish teachers’ professional judgement. Education systems should favour tools explicitly designed to enhance teaching and learning, co-created with teachers and students, and scrutinised through rigorous trials. By doing so, governments can ensure that GenAI supports teachers and students worldwide and equips learners with the GenAI literacy skills that will likely be essential for success in the future labour market and in life more broadly.